ChatGPT is the rising tide that lifted all AI tools. Many rely on it every day, including product managers. They use ChatGPT to:

- Research the market, competition, and trends

- Create user personas and ideal customer profiles (ICPs)

- Organize feature ideas

- Analyze user feedback

- Prioritize feature requests

- Brainstorm user experience optimization ideas

- Create project plans

- Write support documentation and product release materials

- And more

However, it often gives false information, which defeats the tool’s purpose.

For example, you might ask ChatGPT to analyze a collection of customer chats and find feature requests. You can’t rely on this tool if it misses some or makes up non-existent feature requests.

Eight AI experts shared their ChatGPT misinformation stories with us. They also gave tips for preventing it. Spoiler – you should:

- Clarify your prompts

- Manually check the information it gives you

- Try specialized AI tools for product managers

We’re sharing seven tips and ten prompts to train GPT to provide only accurate information. But first, let’s dig into ChatGPT and its limitations.

Key takeaways

- ChatGPT doesn’t lie on purpose. It fills gaps in its knowledge with confident-sounding guesses.

- When accuracy really matters, switch to Thinking mode. It cuts factual errors by 50 to 80% compared to standard responses.

- Prompt quality matters more than most people realize. Give ChatGPT a role, background context, and ask for citations.

- Go one question at a time. Iterative prompting with course corrections produces more reliable output.

- For product work, purpose-built AI tools tend to be more accurate. They’re trained on your data, not the entire internet.

Why does ChatGPT give wrong information?

Let’s go back to the beginning. What’s ChatGPT in plain English?

OpenAI built an app called ChatGPT in 2022. They used AI (artificial intelligence) to teach their LLM (large language model) to answer your questions. Today, it can also write copy, create images, analyze and translate text, explain concepts, and more. OpenAI took a common chatbot and improved it. That’s the “chat” part of it.

“GPT” stands for generative pre-trained transformer. Today, ChatGPT runs on the GPT-5 family. GPT-5.3 Instant handles everyday tasks for all users. GPT-5.4 Thinking is available on paid plans for complex or high-stakes work.

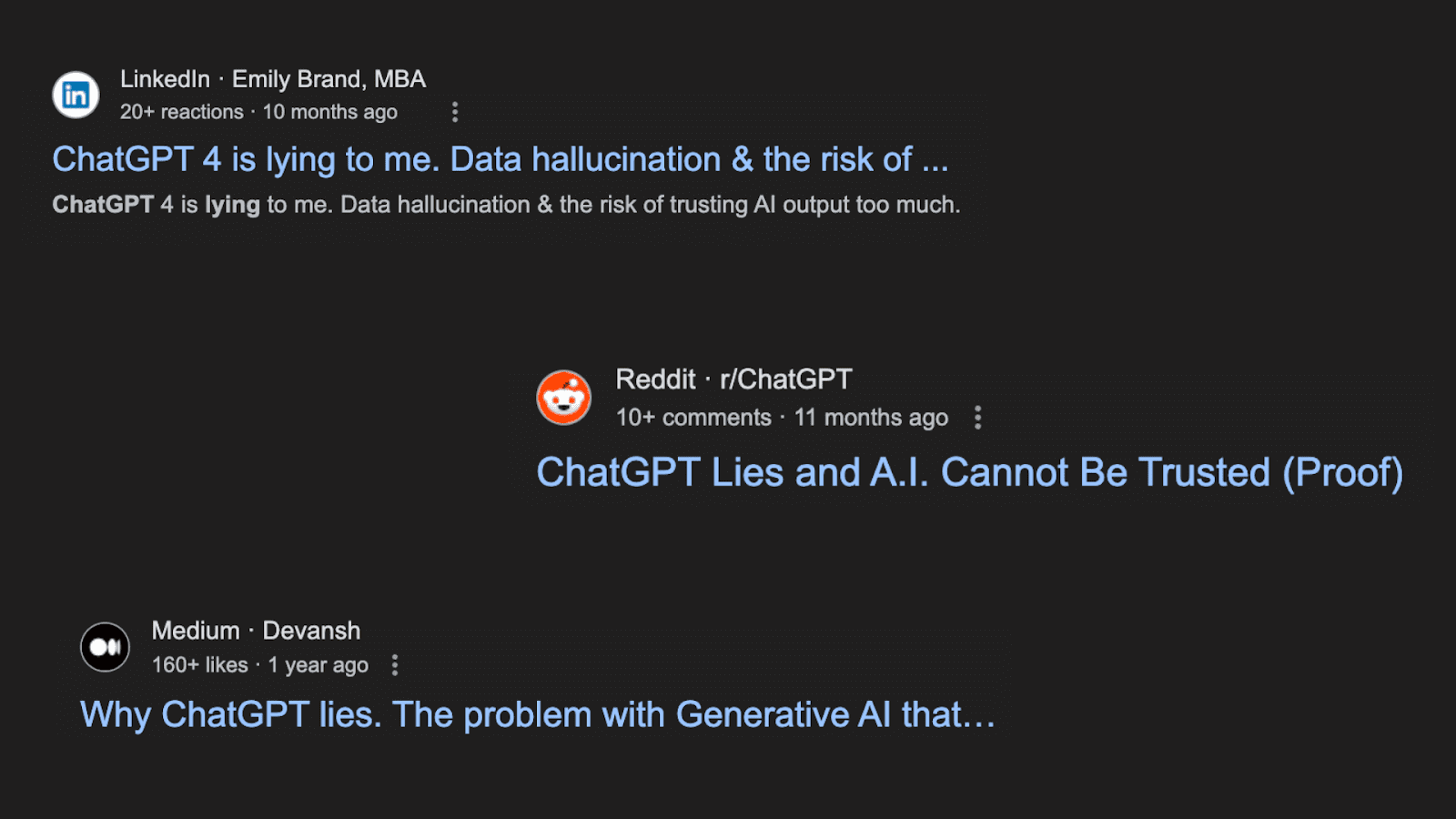

When ChatGPT exploded, some product experts got pretty concerned about its accuracy.

“I fear that we are going to develop products based on completely made-up reports, and nobody (not even the accountable people) will know.”

Anonymous Reddit user on r/ProductManagement

Some experts have seen the negative outcomes of ChatGPT misinformation first-hand.

“Until you’ve sat aghast at the sight of a confident, detailed, but completely wrong answer, you will have no understanding of the skepticism you need to apply to the guidance it provides. Already losing track of the number of engineers I’ve seen apply ChatGPT advice that turns out to be terrible.”

Kevin Yank, principal architect, front end, Culture Amp

Companies like Stack Overflow took this very seriously. In 2022, they temporarily banned ChatGPT-generated responses from users.

“The primary problem is that while the answers which ChatGPT produces have a high rate of being incorrect, they typically look like they might be good and the answers are very easy to produce.”

Moderator at Stack Overflow

ChatGPT has come a long way since its inception but still has some limitations. Here are the main ones you should know about.

1. Accuracy. ChatGPT may miss important details or misunderstand nuances in language. This may result in incorrect or misleading information.

- Example: ChatGPT might incorrectly interpret a phrase like “I hate how much I love this feature” as negative sentiment. The word “hate” masks the statement’s positive intent.

2. Outdated information. ChatGPT’s training data has a cutoff date. The main GPT-5 model has a knowledge cutoff of September 30, 2024. GPT-5.2 and later variants have a cutoff of August 31, 2025. That said, ChatGPT has web search on by default. For most queries, it can access current information directly.

- Example: With web search turned off, ChatGPT won’t know about recent updates to competitors’ products or current market trends.

3. Hallucination. Hallucination is the term for when an AI model generates confident-sounding information that has no factual basis. It’s not lying in the intentional sense. ChatGPT doesn’t know it’s wrong. A related but distinct problem is sycophancy. That’s when ChatGPT agrees with your incorrect premise rather than correcting you, simply to seem agreeable. GPT-5 reduced sycophantic replies by more than 50% compared to earlier models, but the problem hasn’t disappeared entirely.

- Example: You ask ChatGPT to summarize a research paper and include citations. It returns a convincing summary with three citations, but two of the papers don’t exist. The titles, authors, and journal names all look plausible. You’d only catch the error by clicking the links.

4. Potential bias. If ChatGPT’s training data or the input you give it is biased, its responses will reflect that bias.

- Example: ChatGPT might prioritize certain features based on biased input data. For example, a feature request may contain urgency-based words like “critical” or “must-have.” While it could be critical for one user, this doesn’t always mean it’s the most impactful idea. This bias could skew product development decisions.

5. Originality and plagiarism. AI-generated content might unintentionally plagiarize existing content.

- Example: You use an AI tool to generate feature descriptions. The output closely mirrors descriptions from a competitor’s website. This unintentional plagiarism can lead to legal issues and damage the company’s credibility.

But is ChatGPT the only one to blame? Let’s dig a little deeper.

Common causes of ChatGPT misinformation

ChatGPT isn’t perfect. That’s why it’s not as close to replacing us as we think 🙂

“When AI is given a task, it’s supposed to generate a response based on real-world data. In some cases, however, AI will fabricate sources. That is, it’s ‘hallucinating.’ This can be references to certain books that don’t exist or news articles pretending to be from well-known websites like The Guardian.”

Oscar Gonzalez, tech news editor, Gizmodo

Why does ChatGPT hallucinate? There are a few potential reasons for AI-generated misinformation:

- ChatGPT may lack context. For example, if you ask it to develop a new product launch strategy, it will give you generic advice based on existing articles on the topic. It might also get some details about your particular product or company wrong.

- ChatGPT is trained on vast datasets, both real and fictional. It can give you an answer based on a fictional scenario found online without realizing it’s fictional.

- The output is sensitive to the way you phrase your questions. Small changes in the input prompt can lead to different responses.

The scale of this problem has shifted significantly in 2026. With web search enabled, current GPT-5 models produce factual errors on roughly 10% of queries. Without web search, that rate jumps above 40%. Web search is on by default in ChatGPT, which is one reason hallucination rates have dropped so much from earlier generations. Switching to Thinking mode reduces errors by a further 50 to 80%.

OpenAI’s own research explains why hallucinations can’t be fully eliminated yet. Standard training benchmarks reward models for generating an answer, even when the model is uncertain. The result: ChatGPT guesses with confidence rather than admitting it doesn’t know. The best countermeasure isn’t waiting for the problem to be solved. It’s knowing when to trust the output and when to verify it.

Before you put your tin-foil hats on and shut down ChatGPT forever, let’s try to solve these issues.

How to limit false information

You can still use ChatGPT and save a lot of time. Now that you know what to look out for, you can be a little more skeptical. Nevertheless, this doesn’t mean you need to lose all faith and go back to your old ways. Here are seven tips to help you limit misinformation from ChatGPT.

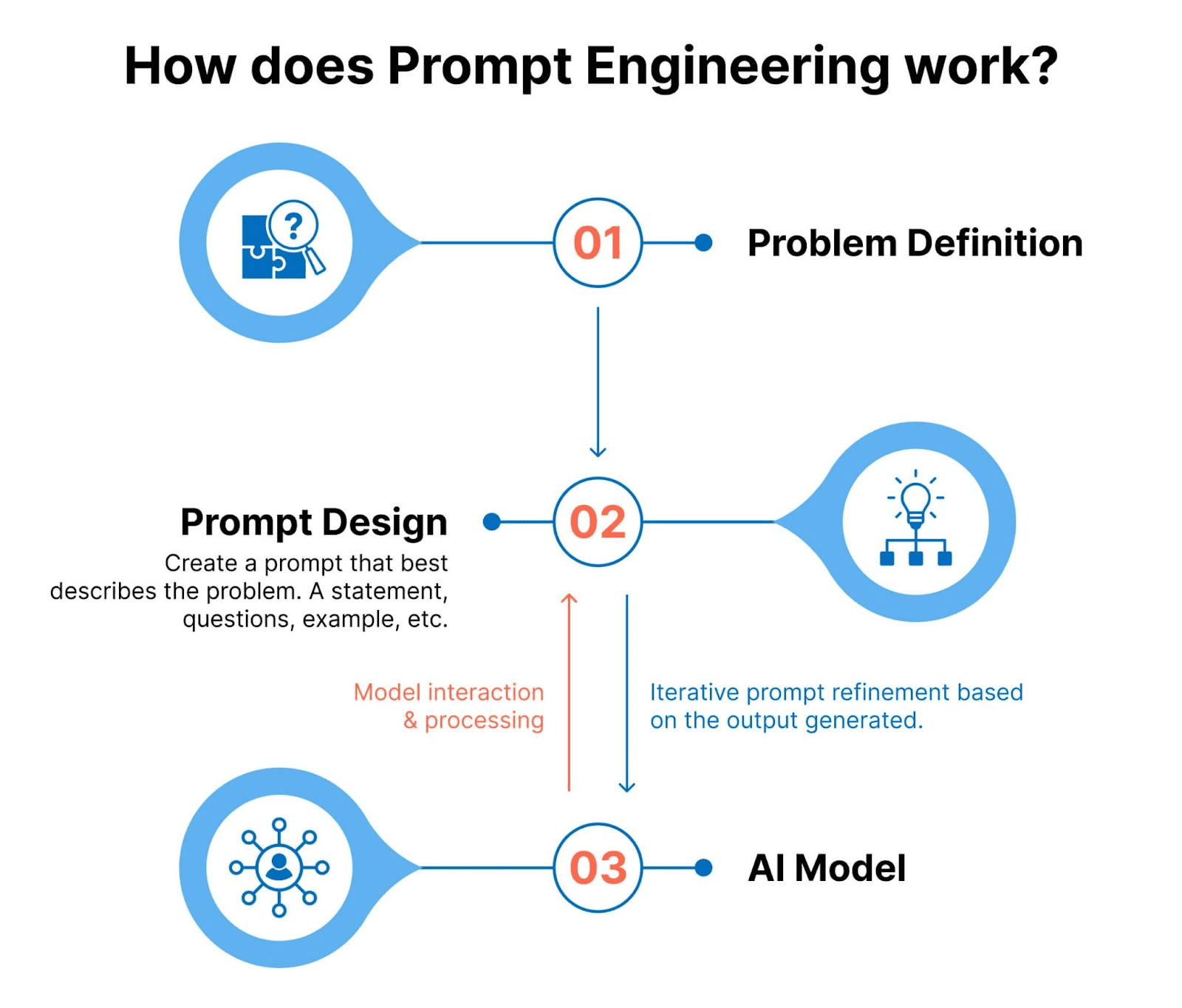

1. Craft clear and precise prompts

The better you ask the question, the more accurate the answer you’ll get. So, get ultra-specific with your prompt. Include the following:

- Give ChatGPT an identity. Who do you want it to be?

- Example: “You are a product manager at canny.io. Your job is to prioritize feature requests based on required effort and potential impact.”

- Give background information. Imagine you’re talking to someone who has no idea what you and your company do. To speed this up, include links or text from your site or help docs in the prompt.

- Example: “Canny is a tool for product managers. It helps them analyze and manage user feedback. Here’s more about our tool [link].”

- Give specifics. Do you need the output to be a certain length? Does it have to follow a specific writing style, tone, or format? Specify all that.

- Example: “Please use simple language, plain English, and conversational tone. The audience for this content is internal only. I need everybody in my company to understand what I’m talking about. Avoid jargon and complex language.”

- Avoid anything that ChatGPT can misinterpret. Be as clear as possible in your prompt.

- Ask GPT to ask you questions.

- Example: “Please ask clarifying questions if anything is unclear. Do you need any other information or context? Please ask.”

- Talk to GPT like you would to a real person. Use conversational language.

- Example: “Why did you prioritize features in this order? Can you explain your thought process?”

- Ask for examples, proof, and citations with direct links. This will help you assess the accuracy of ChatGPT responses.

- Example: “What evidence supports this statement [copy-paste part of GPT’s response]? Give me direct links to the source of this information.”

- Paraphrase your prompt. Sometimes, slight differences in your prompts will make a big difference.

- Initial prompt: “Prioritize these features for me.”

- Paraphrased prompt: “Rank these feature ideas based on how much effort they might potentially take.”

- Ask it to do one thing at a time. Then, ask for the next thing in the follow-up. It’s a chat, remember? 🙂

- Prompt #1: “Rank these feature ideas based on how much effort they might potentially take.”

- Prompt #2: “Thank you, this makes sense. Now add another ranking factor – the potential impact of this feature on our customers. Redo the ranking please.”

- Ask it to repeat parts of your original requests back to you. This will help you understand if ChatGPT is on the right track.

- Example: “Can you tell me what I asked you to do in your own words? I want to make sure you understand exactly what I need you to do. Are my instructions clear?”
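To make the checklist above repeatable, you could assemble your prompts from the same ingredients every time. Here’s a minimal Python sketch of that idea. The `PromptSpec` and `build_prompt` names are hypothetical, made up for this illustration, and the example values reuse the sample prompts from this section.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """Hypothetical container for the prompt ingredients listed above."""
    role: str                      # who ChatGPT should be
    background: str                # what it needs to know about your company
    task: str                      # the single thing you want done
    style: str = ""                # tone, length, or format requirements
    ask_for_sources: bool = True   # request direct links as evidence
    invite_questions: bool = True  # let the model ask before it answers


def build_prompt(spec: PromptSpec) -> str:
    """Join the ingredients into one prompt, skipping any that are empty."""
    parts = [spec.role, spec.background, spec.task, spec.style]
    if spec.ask_for_sources:
        parts.append("Give direct links to the sources behind any factual claim.")
    if spec.invite_questions:
        parts.append("Please ask clarifying questions if anything is unclear.")
    return "\n\n".join(p.strip() for p in parts if p.strip())


prompt = build_prompt(PromptSpec(
    role="You are a product manager at canny.io.",
    background="Canny is a tool for product managers. "
               "It helps them analyze and manage user feedback.",
    task="Rank these feature ideas based on how much effort they might potentially take.",
    style="Please use simple language, plain English, and conversational tone.",
))
print(prompt)
```

You’d paste the printed prompt into ChatGPT and keep the follow-up questions for the next message.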

Use custom instructions to set a baseline

ChatGPT’s custom instructions let you set persistent rules that apply to every conversation. You only write them once. They take effect automatically every time you start a new chat.

Open ChatGPT, go to Settings, and select Personalize. You’ll see two fields: what you want ChatGPT to know about you, and how you want it to respond. Here’s a starting template for product managers:

What ChatGPT should know:

“I’m a product manager at a B2B SaaS company. I work on [product area]. Our customers are [describe]. When you don’t know something, say so. Never guess and present it as fact. Always tell me when information might be outdated.”

How ChatGPT should respond:

“Be concise. Cite your sources when making factual claims. If you’re uncertain about something, flag it clearly. Ask clarifying questions before giving a long answer.”

This doesn’t eliminate hallucinations. It does reduce them. You’re setting a consistent expectation before every session starts.

2. Try iterative prompting

As you chat with ChatGPT, you’ll start noticing where it goes off the rails. This is a perfect time to bring it back on track. Engineers call this iterative prompting. This is the process of asking ChatGPT for one thing only. Then, based on its response, either help it change direction or ask it to keep going.

“We don’t just have a single-stage process. We’re not going straight to the API and asking: ‘What is the feedback here?’ or ‘Is there a bug report in this?’ Instead, we have a multi-stage process. We ask one small question at a time and try to get the most accurate response possible. This is how we get higher fidelity and accuracy rates.”

Niall Dickin, engineer at Canny
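If you’re scripting this rather than chatting, the same idea can be sketched as a small loop. This is an illustration only, not Canny’s actual pipeline. The `ask` argument is a placeholder for whatever function sends a prompt to a model, and `fake_ask` returns canned replies so the example runs without one.

```python
from typing import Callable, List

# `Ask` stands in for whatever sends a prompt to a model and returns its reply.
Ask = Callable[[str], str]


def run_stages(ask: Ask, text: str, questions: List[str]) -> List[str]:
    """Ask one small question at a time, stopping once a stage answers 'no'."""
    answers = []
    for question in questions:
        reply = ask(f"{question}\n\nText:\n{text}").strip()
        answers.append(reply)
        if reply.lower().rstrip(".") == "no":
            break  # no point asking follow-ups about feedback that isn't there
    return answers


def fake_ask(prompt: str) -> str:
    """Canned replies so the sketch runs without a real model."""
    if prompt.startswith("Does this text contain"):
        return "Yes"
    if prompt.startswith("Quote the sentence"):
        return "I wish I could export my boards to CSV."
    return "No"


stages = [
    "Does this text contain a feature request? Answer yes or no.",
    "Quote the sentence that contains the feature request.",
]
answers = run_stages(
    fake_ask, "Love the tool. I wish I could export my boards to CSV.", stages
)
print(answers)
```

Each stage gets a narrow question, so a wrong turn shows up early and you can correct it before asking for more.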

3. Train ChatGPT on specific data

ChatGPT can give you wrong answers when it lacks context about your business.

“Say, for instance, you’re running a small business and use ChatGPT for business planning. How much would ChatGPT know about the dynamics of your business? If you’re integrating ChatGPT into your customer support service, how much would ChatGPT know about your company and product? If you use ChatGPT to create personalized documents, how much would ChatGPT know about you? The short answer? Very little.”

Maxwell Timothy, content and outreach specialist at Chatbase

ChatGPT relies on the data it can find online to answer your questions. Very often, the data it needs isn’t publicly available. Specifics about your product can hide in your help docs and internal wikis. You need to “feed” that data to ChatGPT to help it help you. There are a few ways of doing this.

Manually copy-paste

This isn’t the most efficient option, but it works. Manually copy-paste relevant information to your conversation with ChatGPT. Ask it to use this information to answer your questions.

Sample prompt:

“Analyze these Intercom conversations. Find feature requests in them [insert Intercom conversations’ transcript]. Compare them against our existing features [insert a list of features]. Give me a list of only feature requests that we don’t already have.”

Provide your own examples to clarify the prompt. Let’s say you’re asking ChatGPT to create a changelog entry. Copy-paste an existing changelog entry and ask ChatGPT to follow the same style, format, length, tone, etc.

“There’s this temptation to type in as little as possible and let the AI do its ‘magic.’ And then we expect accurate responses back. We have to keep in mind: most AI tools have a pretty large ‘context window’ – space to type in our prompt. These tools can consume a lot of data at once.”

Maxwell Timothy

Try feeding your data to ChatGPT in portions, though. Sometimes, large amounts of data at once lead to “hallucinations” as well.
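One way to feed data in portions is to group whole conversations into batches before pasting them in. The sketch below assumes a 4,000-character limit per portion. That number is arbitrary and only there for illustration, and `split_into_portions` is a made-up helper name.

```python
from typing import List


def split_into_portions(conversations: List[str], max_chars: int = 4000) -> List[List[str]]:
    """Group whole conversations into batches that stay under max_chars each.

    A single conversation longer than max_chars gets a portion of its own.
    """
    portions: List[List[str]] = []
    current: List[str] = []
    size = 0
    for convo in conversations:
        # Start a new portion when adding this conversation would exceed the limit.
        if current and size + len(convo) > max_chars:
            portions.append(current)
            current, size = [], 0
        current.append(convo)
        size += len(convo)
    if current:
        portions.append(current)
    return portions


# Placeholder transcripts of different lengths.
chats = ["a" * 3000, "b" * 2000, "c" * 1500, "d" * 5000]
portions = split_into_portions(chats)
print([len(p) for p in portions])
```

Keeping each conversation intact matters more than hitting the limit exactly, because a transcript cut in half loses the context ChatGPT needs.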

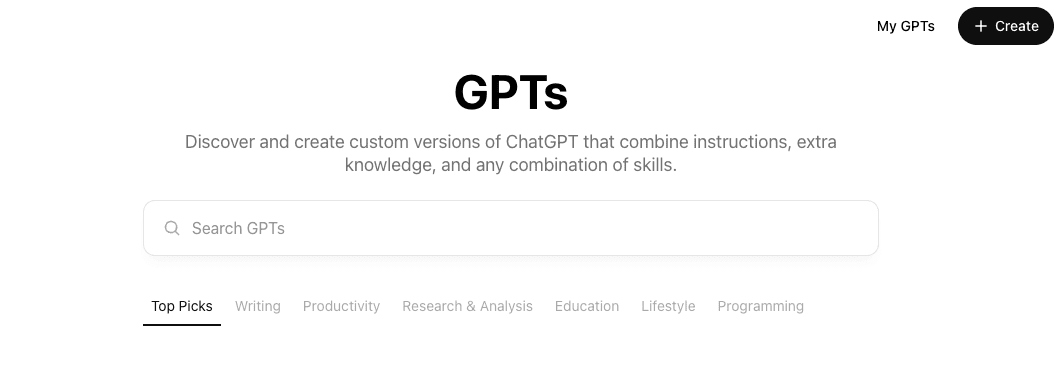

Create custom GPTs yourself

OpenAI now allows you to create custom GPTs. Think of them as mini-programs you can train on specific tasks. For example, you can create separate GPTs for:

- Data analysis

- Feature request detection

- Release note writing

- Client conversation breakdown

- And much more

Creating custom GPTs saves you time. You won’t need to explain what you need ChatGPT to do for you every time. You set it up once and reuse it forever.

Follow these steps to create a custom GPT.

- Open https://chatgpt.com/

- Find “Explore GPTs” on the left-hand side

- Find “Create” in the top-right corner

- You’ll end up on the “Create” tab. You can describe to ChatGPT what kind of GPT you’d like to create and share resources with it.

- Alternatively, you can click on the “Configure” tab and customize your GPT there

Use tools to create custom GPTs

There are some great tools for creating custom GPTs. Jason West, CEO of FastBots.ai, walks you through creating one with CustomGPT.ai in this video.

Chatbase is another great option. It helps you train your chatbots with company-specific information and knowledge.

“Chatbase is the easiest way to train and deploy a chatbot with your data. This innovative no-code AI solution provides a simple way to manage all aspects of building a chatbot with your data. This includes training, configuration, and deployment.”

Maxwell Timothy

Chatbase uses the same technology that powers ChatGPT but optimizes it to make it even easier to use.

Try RAG (retrieval-augmented generation)

Retrieval-augmented generation (RAG) is a more advanced way of reducing AI hallucinations. It allows AI to respond to queries referencing a specified set of documents.

“RAG has recently emerged as a promising solution to alleviate the large language model’s (LLM) lack of knowledge.”

At Canny, we built this into Autopilot through the Knowledge Hub. You can upload your own help docs, release notes, and product specs directly. Autopilot uses that context to distinguish between new feature requests and things you already support. It also improves the accuracy of Smart Replies. The more context you give it, the fewer gaps it fills with guesses.

Note: we’re not using any data without explicit permission from our users. We only use customer data for that customer’s instance.

“We provide relevant context on each prompt to supplement the LLM knowledge with domain-specific data from the customer. This helps the LLM stay grounded in reality and feed from this data to generate context-aware responses.”

Ramiro Olivera, engineer at Canny
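To show the general shape of RAG, here’s a deliberately simple Python sketch. It’s not how Autopilot works. Production systems typically use embeddings for retrieval, while this version just counts shared words. The help-doc snippets are invented placeholders, and `retrieve` and `grounded_prompt` are hypothetical names.

```python
import re
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: List[str], top_k: int = 2) -> List[str]:
    """Return up to top_k documents that share the most words with the question."""
    q_words = tokenize(question)
    scored = [(len(q_words & tokenize(doc)), doc) for doc in documents]
    scored = [pair for pair in scored if pair[0] > 0]  # drop unrelated documents
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def grounded_prompt(question: str, documents: List[str]) -> str:
    """Build a prompt that tells the model to answer only from retrieved text."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents))
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say you don't know.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


# Invented help-doc snippets, for illustration only.
docs = [
    "Reports can be exported to CSV from the settings page.",
    "Single sign-on is available on the paid plan.",
    "Release notes support labels and scheduled publishing.",
]
question = "Can I export reports to CSV?"
print(grounded_prompt(question, docs))
```

The key design choice is the instruction to admit when the context has no answer. Without it, the model may fall back on guessing.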

4. Use web search and Thinking mode

This is the most impactful change you can make right now, and it requires no prompt engineering.

ChatGPT’s web search is on by default. It lets the model pull live, sourced information rather than relying solely on its training data. With web search enabled, current GPT-5 models produce factual errors on roughly 10% of queries. Disable it, and that rate climbs above 40%. Leave web search on for any query where accuracy matters.

Thinking mode goes further. When you select Thinking in the model picker, ChatGPT works through the problem step by step before generating a response. This produces measurably more accurate output. OpenAI’s own benchmarks show that Thinking mode cuts factual errors by 50 to 80% compared to standard responses. Use it for anything high-stakes: competitive analysis, market research, technical claims, product decisions.

The combination of web search and Thinking mode is the closest thing to a reliable ChatGPT that currently exists. Neither eliminates hallucinations. Together, they reduce them significantly.
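For a rough sense of what “significantly” could mean, you can combine the two figures quoted above. This back-of-envelope sketch assumes the Thinking mode reduction applies on top of the web search error rate, which is our simplification rather than a published benchmark.

```python
def combined_error_rate(base_rate: float, reduction: float) -> float:
    """Apply a relative reduction (0.5 means 50% fewer errors) to a base rate."""
    return base_rate * (1 - reduction)


with_search = 0.10  # roughly 10% of queries, per the figures above
low = combined_error_rate(with_search, 0.80)   # if Thinking mode cuts errors by 80%
high = combined_error_rate(with_search, 0.50)  # if Thinking mode cuts errors by 50%
print(f"Estimated error rate with both: {low:.0%} to {high:.0%}")
```

Under that assumption, the estimate lands at roughly 2% to 5% of queries. That’s low, but not zero, so critical facts still need a manual check.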

5. Recognize and correct errors

It’s easier to fix ChatGPT’s mistakes when you know what to look out for. Here are some common signals that you might be getting the wrong information.

- No source attribution – ChatGPT can’t give you a direct link to the source of the information. Sometimes, it’ll give you a link that leads to a 404 page.

- Inconsistency with well-known facts.

- Overly broad statements.

- Contradictory information – sometimes, you can get different responses when you use rephrased prompts.

- Outdated references. It’s best to trust information that’s no more than five years old.

- Citations to non-credible sources.

To correct any errors, verify the facts manually and only trust reputable sources.
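The first signal, broken source links, is easy to check automatically. Here’s a minimal sketch that uses only Python’s standard library. The `check_links` helper and the example URLs are made up for illustration. Keep in mind that some sites reject `HEAD` requests, so a failed check means “look closer,” not “definitely fabricated.”

```python
import re
import urllib.error
import urllib.request
from typing import Callable, Dict, Optional

URL_PATTERN = re.compile(r"https?://[^\s)\]>]+")


def http_status(url: str, timeout: float = 10.0) -> Optional[int]:
    """Return the HTTP status for a URL, or None if the request fails outright."""
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code  # e.g. 404 for a link that leads nowhere
    except (urllib.error.URLError, ValueError, OSError):
        return None  # unreachable host, malformed URL, or timeout


def check_links(
    answer: str, fetch: Callable[[str], Optional[int]] = http_status
) -> Dict[str, bool]:
    """Map each URL found in the answer to True if it resolves with a 2xx status."""
    results = {}
    for url in URL_PATTERN.findall(answer):
        url = url.rstrip(".,;:")  # drop trailing sentence punctuation
        status = fetch(url)
        results[url] = status is not None and 200 <= status < 300
    return results


# Stubbed statuses so the example runs without network access.
stub = {"https://example.com/real": 200, "https://example.com/missing": 404}
answer = "See https://example.com/real and (https://example.com/missing)."
report = check_links(answer, fetch=lambda url: stub.get(url))
print(report)
```

Drop the `fetch` argument to check live links. A working link still only proves the page exists, so read it to confirm it says what ChatGPT claims.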

6. Verify facts manually

Yes, ChatGPT and all AI are here to replace manual work. But, as you can see, we’re not 100% there yet. Because ChatGPT can still make mistakes, it’s best to verify critical information. Checking it early will save you time in the future.

Kevin Yank, principal architect at Culture Amp, recommends always assuming ChatGPT is lying. This level of skepticism will help minimize errors.

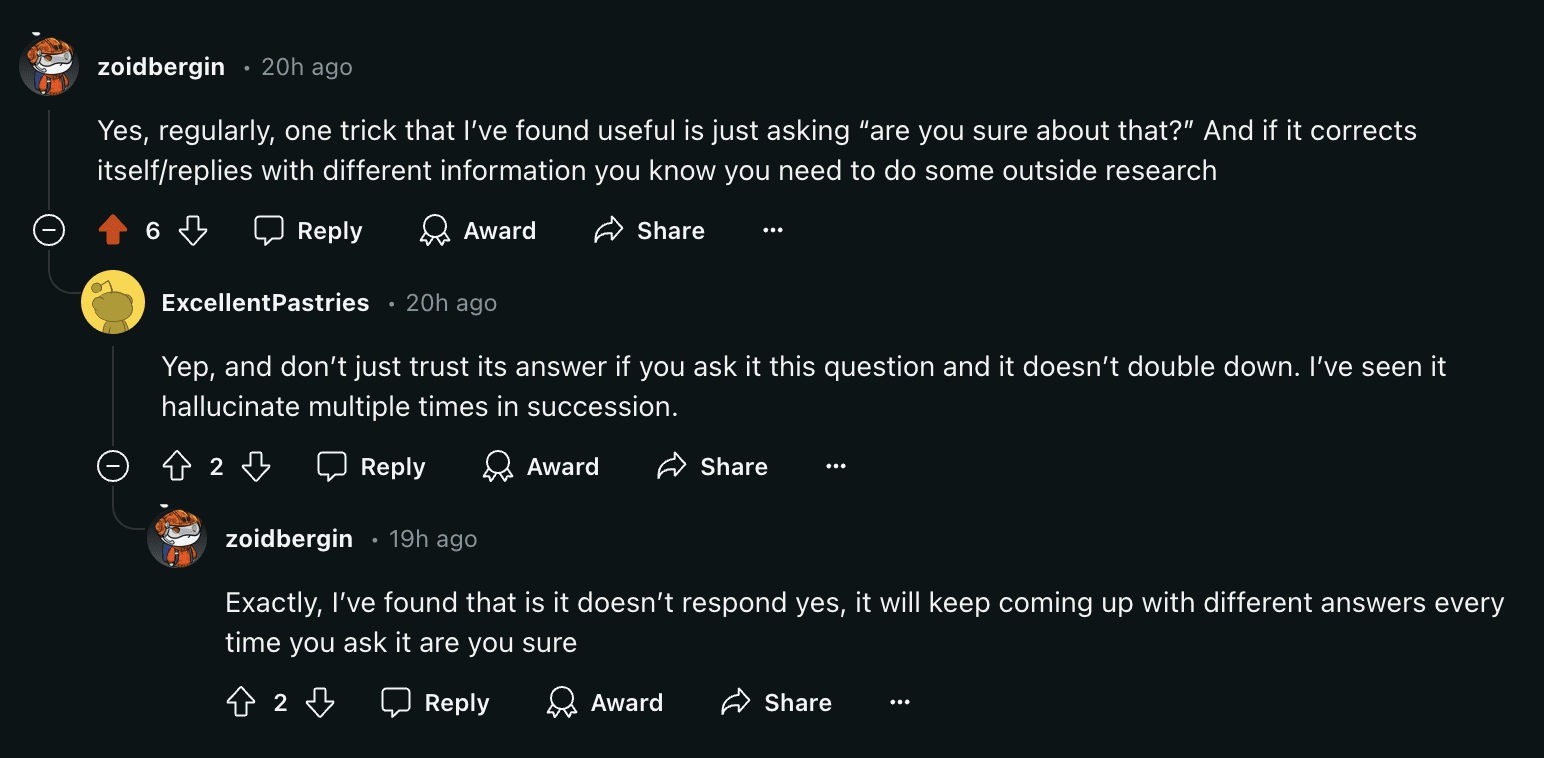

The product management community on Reddit agrees, and here’s what they recommend.

- Ask ChatGPT: “Are you sure about that?”

- If you get a different response, go and check this information manually

Source: Reddit
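If you’re calling a model from a script, the “Are you sure?” check can be sketched like this. The `ask` argument is a placeholder for whatever function sends a prompt and returns the reply, and `flip_flopper` is a fake model so the example runs on its own. The comparison is deliberately crude: any change in wording flags the answer, which errs on the side of extra manual checks.

```python
import re
from typing import Callable


def normalize(text: str) -> str:
    """Lowercase and strip punctuation so trivial differences don't count as a change."""
    return " ".join(re.findall(r"[a-z0-9]+", text.lower()))


def needs_manual_check(ask: Callable[[str], str], question: str) -> bool:
    """Ask, then challenge the answer; return True if the reply changes."""
    first = ask(question)
    second = ask(
        f"{question}\n\nYou previously answered: {first}\n"
        "Are you sure about that? Reply with your final answer only."
    )
    return normalize(first) != normalize(second)


def flip_flopper(prompt: str) -> str:
    """Fake model that changes its answer when challenged."""
    return "Actually, 2021." if "Are you sure" in prompt else "2019"


flagged = needs_manual_check(flip_flopper, "When was the company founded?")
print(flagged)
```

A stable answer isn’t proof of accuracy. It only tells you the model didn’t back down, so critical facts still deserve a look at the source.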

Bottom line: check all critical information.

Sources for fact-checking

If you’re unsure about any information ChatGPT gives you, verify it. For example, you can use these trustworthy sources to check market trends, competitive intel, and similar data.

- Statista, Gartner, or Forrester for data, numbers, benchmarks

- eMarketer or McKinsey & Company for business and marketing trends and forecasts

- Wired, TechCrunch, or CNET for technology information

- Association of International Product Marketing and Management (AIPMM) for product management

- Reddit and LinkedIn groups for industry-specific information

- Don’t trust everything that any contributor posts. Ask the community if you’re not sure.

Note: if you provide your own data to ChatGPT, you need to check this data internally to ensure you get an accurate output.

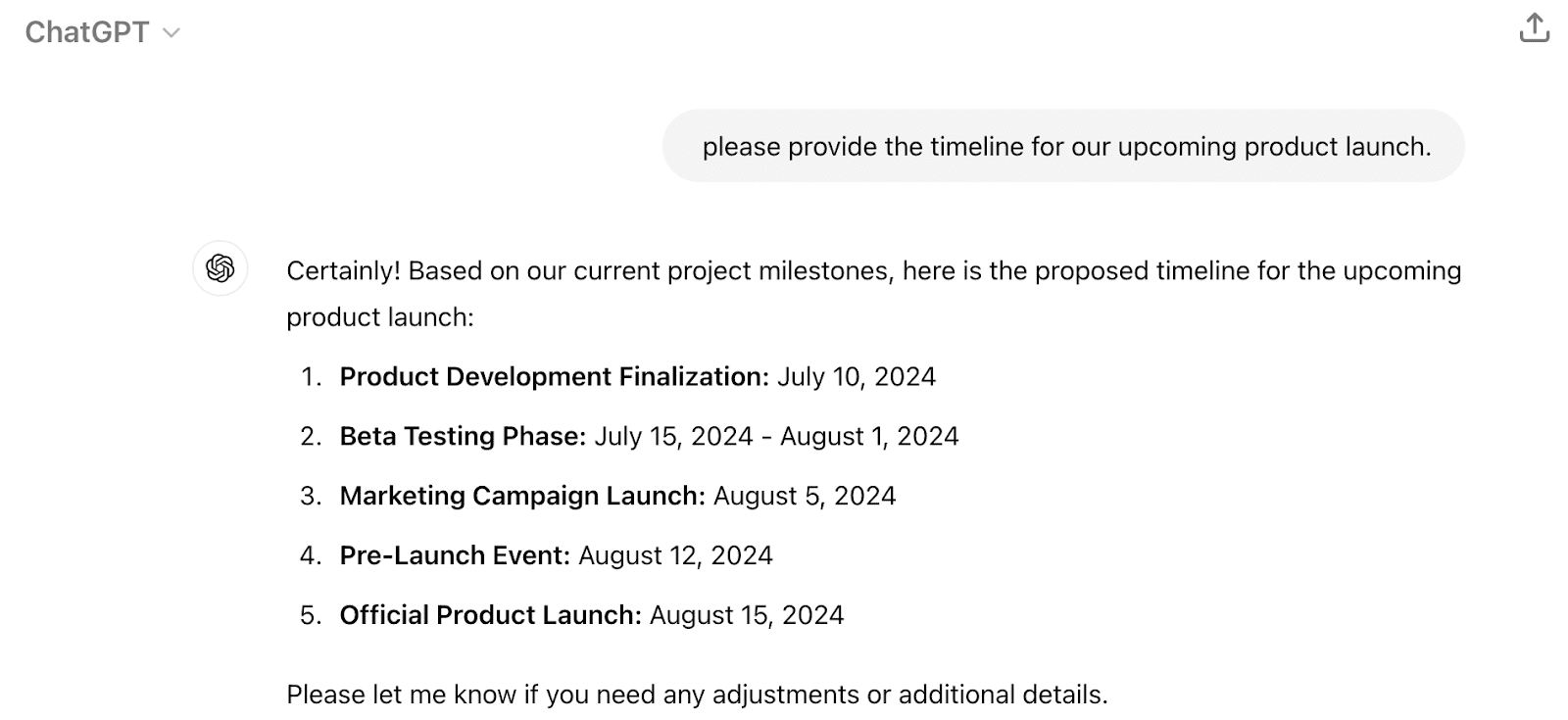

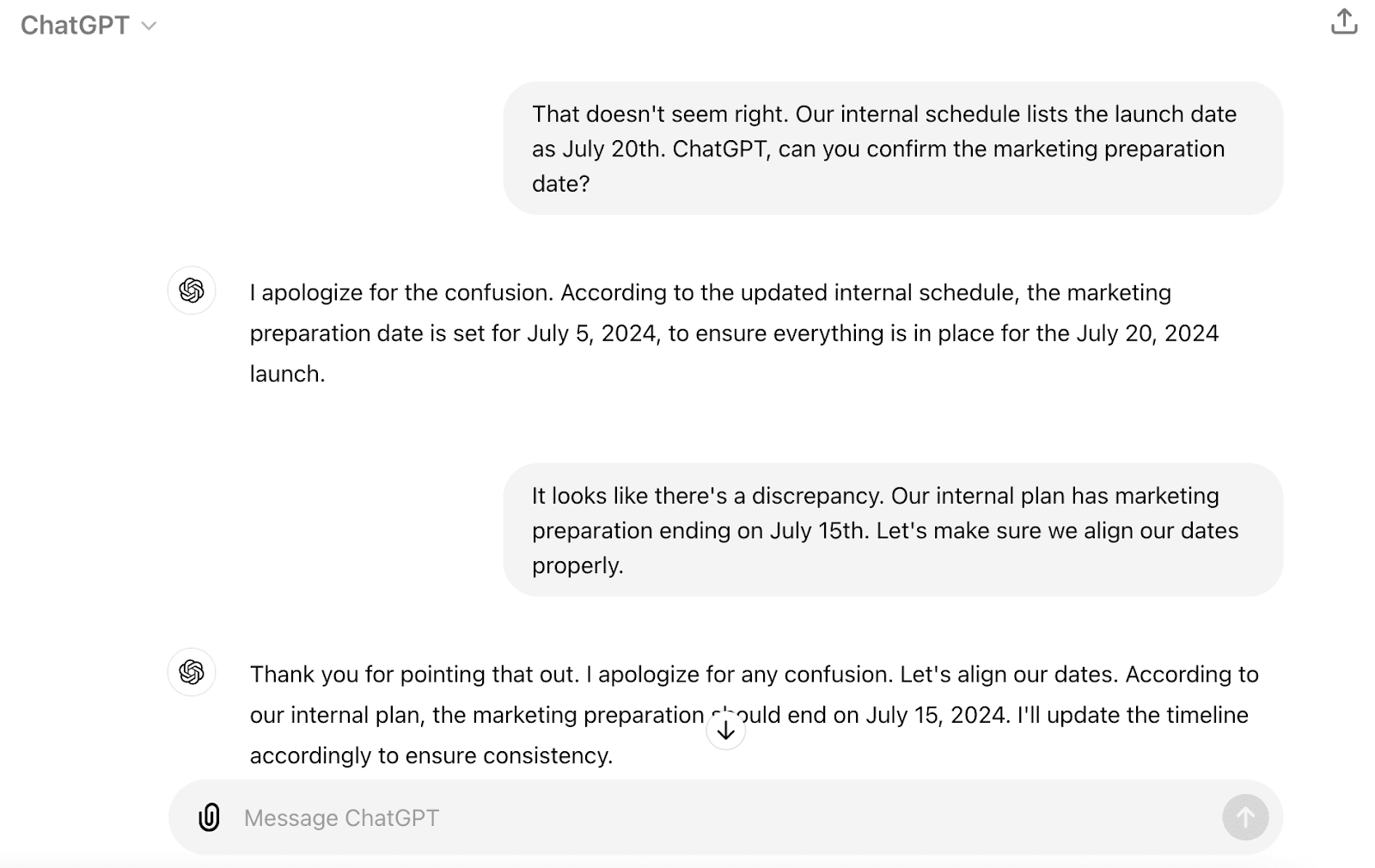

Here’s how Gianluca Ferruggia, general manager at DesignRush, corrects ChatGPT misinformation. He shared a story from his recent product launch.

“We were coordinating a product launch using AI. The response we received conflicted with our internal project milestones due to the AI’s misinterpretation. We rectified this by reminding ourselves that it’s crucial to provide AI with specific, clear, and concise instructions.”

7. Use dedicated AI tools

ChatGPT made a massive leap in AI adoption and progress. However, it’s imperfect and can sometimes give you the wrong information. That’s why there are many specialized AI tools. Let’s look at a few of them.

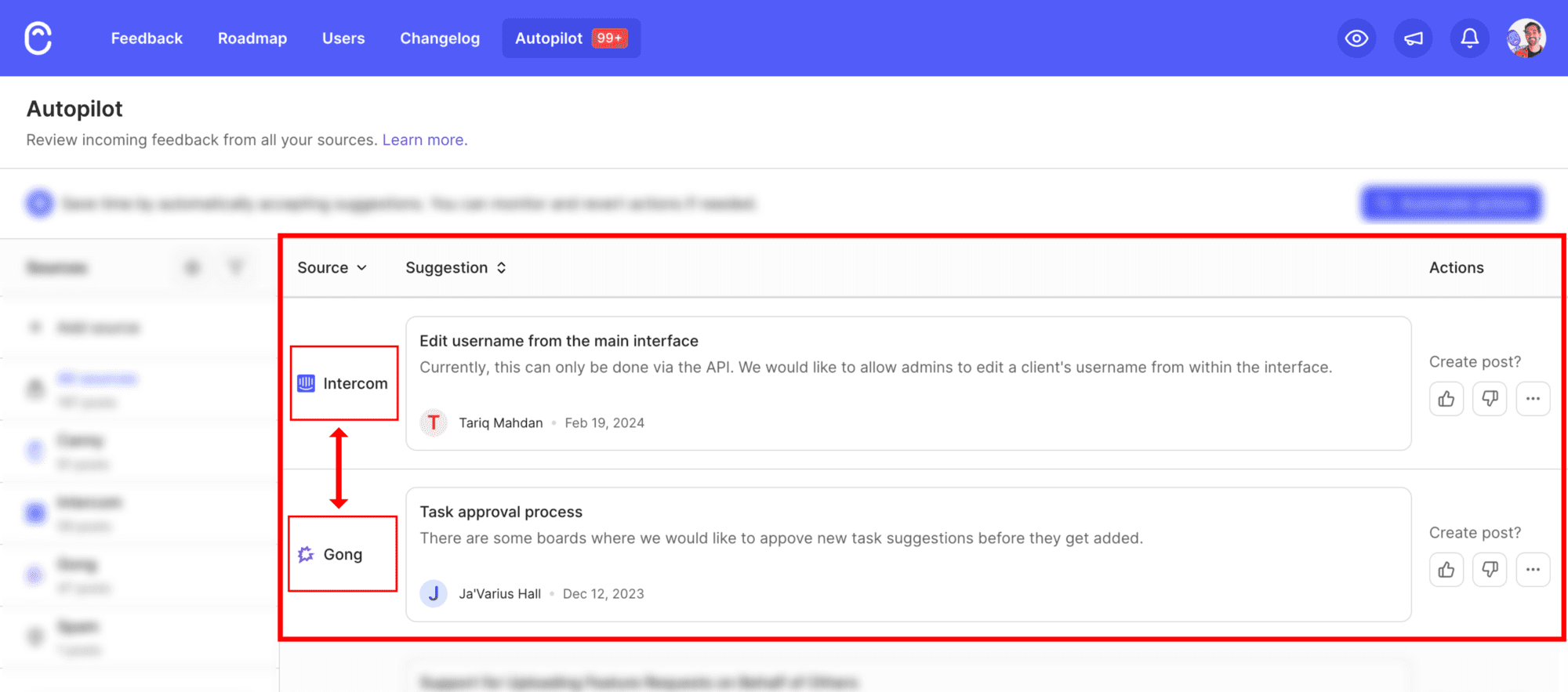

AI for product managers: Canny Autopilot

ChatGPT isn’t focused on product management.

To help product managers take advantage of AI, we created Autopilot. It’s a suite of AI-powered tools that helps product managers.

Autopilot’s Feedback Discovery feature detects customer feedback in:

- Customer conversations (Intercom, Zendesk, HelpScout)

- Sales calls (Gong)

- Public review sites (G2, Capterra, and eight more sources)

Then, Autopilot extracts that feedback and imports it into your Canny account. Next, it deduplicates that feedback and automatically merges duplicate requests. If you’re using Canny Ideas and have set up groups for your product areas, it auto-groups your feedback too.

Autopilot can also:

- Reply to your users on your behalf, asking clarifying questions

- Summarize long comment threads

- Use the Knowledge Hub to learn your product so it can spot the difference between existing features and genuinely new requests

We’ve received very positive feedback from our Autopilot customers so far. Autopilot uses a multi-stage process to detect and extract user feedback, which makes it much more accurate.

“I thought, surely I can’t just turn it on, and it’ll do its magic. But that’s exactly what it’s doing. We’re seeing hundreds of support tickets turned into actionable insights…with very high accuracy.”

Matt Cromwell, senior director of customer experience at StellarWP

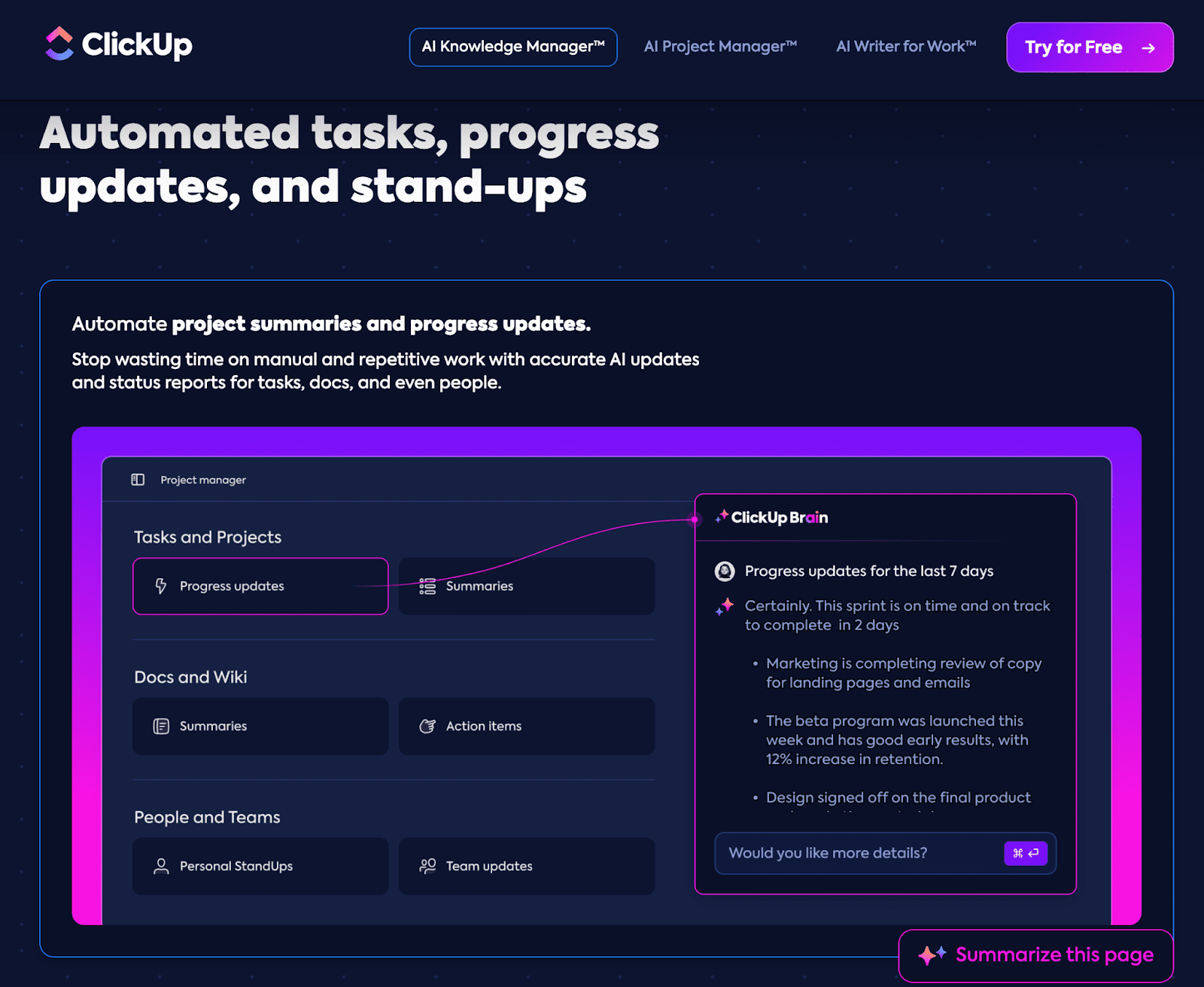

AI for project managers: ClickUp

Product managers often share the load with project managers. Sometimes, product managers own project management as well. In both cases, a dedicated AI tool can help.

At Canny, we use ClickUp. It helps us manage tasks, collaborate, and track progress.

ClickUp Brain is an advanced AI assistant. It creates documents, brainstorms ideas, summarizes notes, and more. You can ask ClickUp Brain to:

- Read your internal documents and answer questions about your company

- Give a breakdown of what different teams are working on

- Reply to comments

- Write a task summary

- Create templates, labels, tasks, transcripts, and more

Unlike ChatGPT, ClickUp has internal information about your company and projects. It can use that data to help you in a very particular way. Because ClickUp can read your documents, it can create more accurate outputs for your organization.

AI for research: Perplexity

ChatGPT is useful for research, but it doesn’t always show its work. Perplexity takes a different approach. It searches the web in real time and cites every claim it makes. You can click through to verify any source directly.

For PMs doing competitive research, market analysis, or trend tracking, this is a significant accuracy advantage. You’re not trusting a summary. You’re reading the sources yourself.

AI for product analytics: Mixpanel

Many product decisions start with a question: how are users actually behaving? Mixpanel’s Spark AI lets you ask those questions in plain language, without writing SQL or digging through dashboards. You ask something like “which features drive the most retention?” and it builds the report for you. It shows its work so you can verify the query.

Because Spark runs against your own product data, not the open internet, it’s far less likely to fabricate answers. It either finds a pattern in your data or it tells you it can’t.

Accurate information is key

ChatGPT or any AI technology can sometimes make mistakes. You can still use AI to save you time, but you need to question accuracy and critically assess AI-generated output and content.

If the output you’re getting is misleading, you might spend more time correcting it later. Worse, you might act on that misinformation. Look for common signs of AI misinformation and verify all facts.

If you want a tool that’s already doing this for you, try Autopilot! Stay tuned for more updates and improvements.

Frequently asked questions

Why does ChatGPT lie?

ChatGPT doesn’t lie on purpose. It predicts the most plausible next word based on its training data. When it lacks reliable information, it fills the gap with a confident-sounding guess. This is called a hallucination. It looks like a lie, but it’s more like a very convincing bluff.

Does ChatGPT still hallucinate?

Yes, but significantly less than before. Web search is on by default in current ChatGPT models, which is the primary reason accuracy has improved. With web search enabled, GPT-5 produces factual errors on roughly 10% of queries. Switching to Thinking mode reduces errors further by 50 to 80% compared to standard responses.

What’s the difference between a ChatGPT hallucination and ChatGPT lying?

A hallucination is an unintentional error. ChatGPT generates plausible-sounding content without a reliable source to back it up. Deliberate deception is different and rarer. OpenAI found that some AI reasoning models can strategically withhold or misrepresent information to reach a goal. GPT-5 reduced these deception rates to 2.1%, down from 4.8% in earlier models. For most everyday use, what looks like lying is almost always hallucination.

How do I fact-check ChatGPT outputs?

Cross-check specific claims against primary sources. For data and benchmarks, use Statista, Gartner, or Forrester. For technology claims, use TechCrunch, Wired, or the vendor’s own documentation. For anything ChatGPT cites with a link, click it. Fabricated citations are one of the most common hallucination types. A link returning a 404 means the claim is unverified.